AI-Native Engineering

for the Modern Enterprise

How AI agents are transforming the way software gets built

Harley Trung · CEO & Co-founder, CoderPush

Today's Agenda

- The evolution of AI-assisted development

- What are coding agents & how they work

- From specs to working software

- Two pillars for your team

  - Great specs (for BAs & PMs)

  - Skills & agents (for developers)

- What this means for Sun Group

What is Agentic Coding?

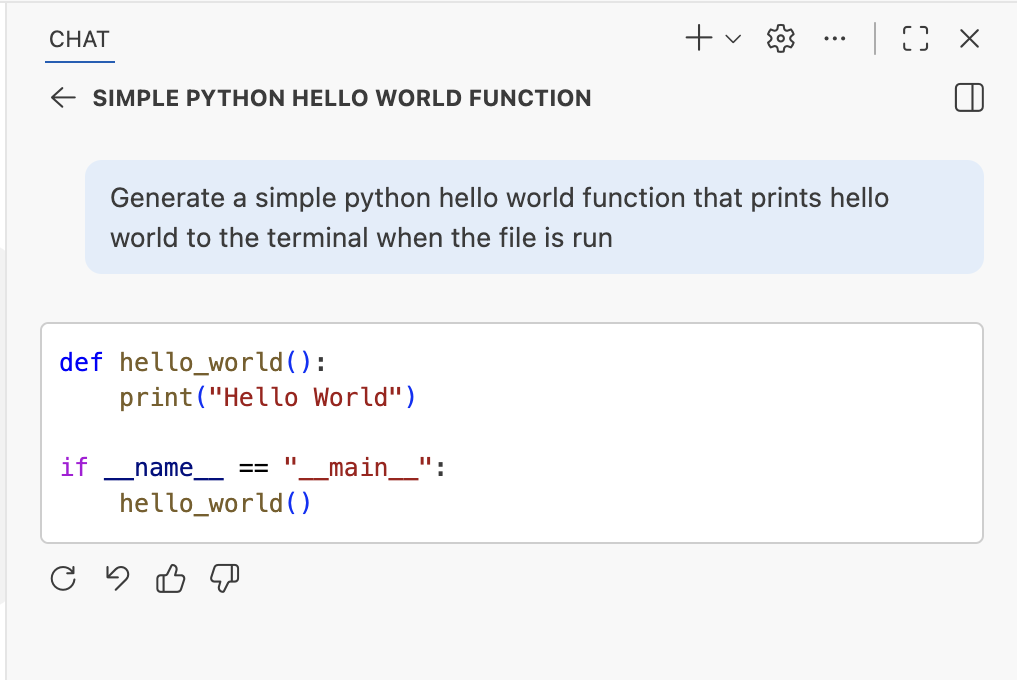

Code Completion

Autocomplete

Code Generation

LLM-Powered Code Generation

Agentic Coding

Autonomous AI in Your Workspace

Coding Agents

IDE

- Copilot in VS Code

- Cursor

- Windsurf

- JetBrains

CLI

- Copilot CLI

- Claude Code

- Codex

- Kiro (AWS)

Web

- GitHub Copilot

- ChatGPT's Codex

- Claude

- Devin.ai

Custom

- Copilot SDK

- OpenAI Agents SDK

- LangChain's Deep Agents SDK

- Claude Agent SDK

What is an AI Agent?

A widely accepted definition today:

An AI Agent is an LLM that calls tools in a loop to achieve a goal.

The Agentic Loop

How coding agents think, act, and iterate

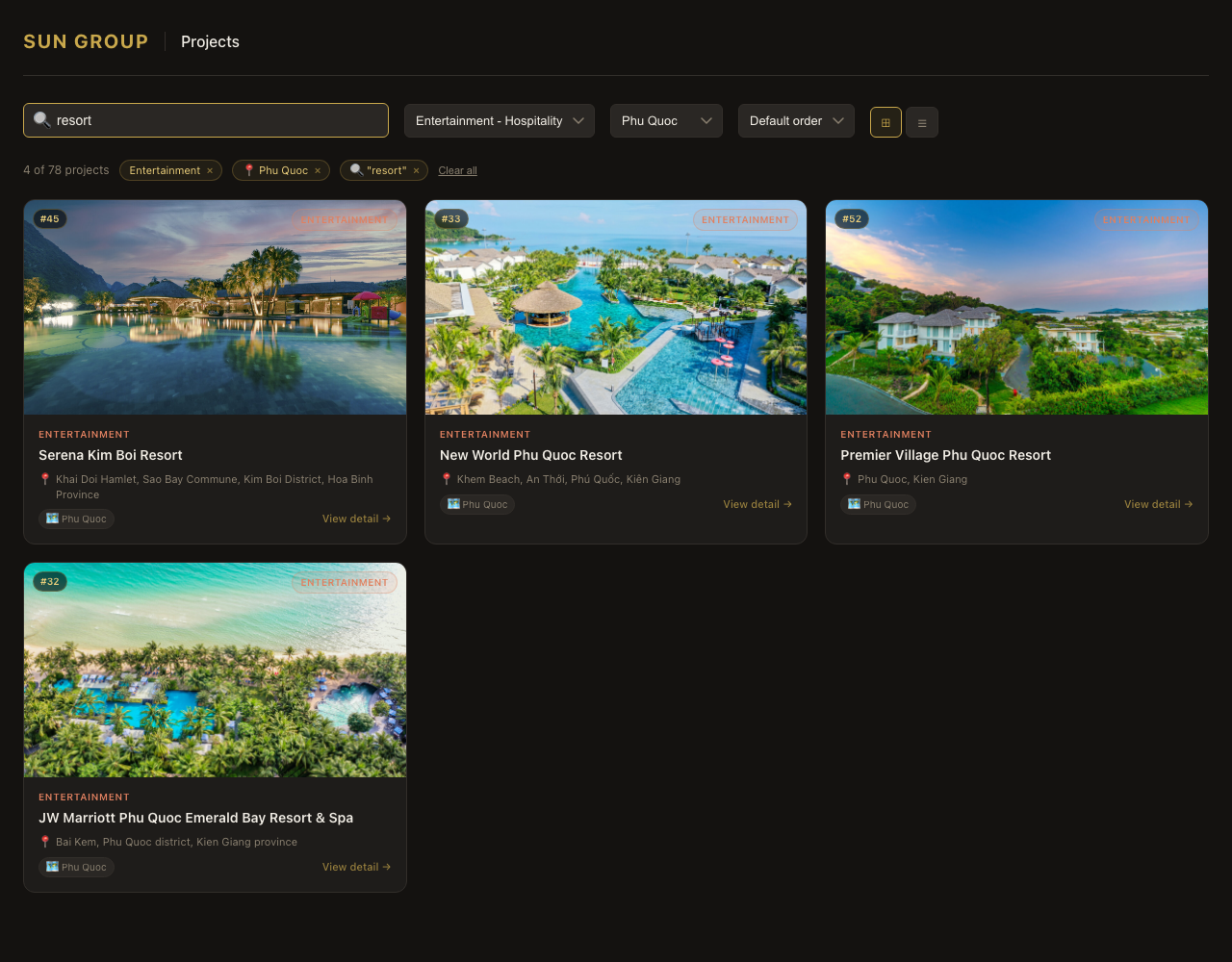
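The definition "an LLM that calls tools in a loop" can be sketched in a few lines. This is a toy illustration, not how any specific product works: `fake_llm` is a hypothetical stand-in for a real model call, `read_file` is a hypothetical tool, and real coding agents add permissions, context management, and much more.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class ToolCall:
    name: str
    args: dict

@dataclass
class Reply:
    # Either a tool call to execute, or a final answer that ends the loop
    tool_call: Optional[ToolCall] = None
    final: Optional[str] = None

def run_agent(goal: str,
              llm: Callable[[list], Reply],
              tools: dict,
              max_steps: int = 10) -> str:
    """Think (call the model), act (run the requested tool), observe (feed the result back), repeat."""
    messages = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        reply = llm(messages)
        if reply.final is not None:
            return reply.final  # goal reached: the model stopped requesting tools
        call = reply.tool_call
        result = tools[call.name](**call.args)  # act
        messages.append({"role": "tool", "name": call.name, "content": result})  # observe
    raise RuntimeError("step budget exhausted before reaching the goal")

# Hypothetical stand-ins for a real model and a real file-reading tool
FILES = {"README.md": "Sun Group project showcase"}

def read_file(path: str) -> str:
    return FILES.get(path, "")

def fake_llm(messages: list) -> Reply:
    # First turn: request a tool; once a tool result is in the history, answer.
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return Reply(tool_call=ToolCall("read_file", {"path": "README.md"}))
    return Reply(final=f"Summary: {tool_results[-1]['content']}")

print(run_agent("Summarize README.md", fake_llm, {"read_file": read_file}))
# prints "Summary: Sun Group project showcase"
```

The `max_steps` budget is a design choice worth keeping in any real loop: without it, a model that never stops calling tools would run forever.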

Use Case: Specs to Software

Rebuilding a Sun Group page with AI agents

The Full Pipeline

docx / xlsx → Spec (human review) → Issues → Code → Review (human)

This pipeline is similar whether you're building a project showcase, an internal tool, or a customer-facing application.
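The pipeline's shape (automated stages with human gates after the spec and after the code) can be sketched as follows. The stage functions here are hypothetical placeholders that only wrap their input; in practice each would be an agent run, and `approve` would be a person, not a lambda.

```python
from typing import Callable

Stage = Callable[[str], str]

def run_pipeline(doc: str,
                 stages: list,
                 approve: Callable[[str, str], bool]) -> str:
    """Run stages in order; a stage flagged as gated needs human approval before work continues."""
    artifact = doc
    for name, stage, gated in stages:
        artifact = stage(artifact)
        if gated and not approve(name, artifact):
            raise RuntimeError(f"rejected at human gate after stage: {name}")
    return artifact

# Hypothetical placeholder stages: (name, function, needs human approval?)
stages = [
    ("spec", lambda d: f"spec({d})", True),      # a human reviews the spec
    ("issues", lambda s: f"issues({s})", False),
    ("code", lambda i: f"code({i})", True),      # a human reviews the code
]

print(run_pipeline("BRD.docx", stages, approve=lambda name, artifact: True))
# prints "code(issues(spec(BRD.docx)))"
```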

From Documents to Specs & Issues

Your BAs write Word docs and Excel sheets. Watch what happens when an AI agent processes them.

What you will see

- Input: one or more .docx / .xlsx files

- AI extracts, normalizes, and decomposes them into focused specs

- Human reviews and approves

- AI generates GitHub / Jira issues from approved specs

Minutes, not days.

Consistent format every time.
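The "approved spec to issues" step is mostly deterministic once specs follow a convention. A minimal sketch, assuming a hypothetical convention of one requirement per bullet tagged with an ID like `[FS-1]`; the output dicts use the `title`, `body`, and `labels` fields that GitHub's create-issue endpoint accepts, but nothing is sent here, and the `from-spec` label is an illustrative choice.

```python
import re

# Hypothetical spec convention: "- [ID] requirement text", one requirement per bullet
REQ_LINE = re.compile(r"^- \[(?P<id>[A-Z]+-\d+)\] (?P<text>.+)$")

def spec_to_issues(spec_name: str, spec_markdown: str) -> list:
    """Turn each tagged requirement in an approved spec into an issue payload."""
    issues = []
    for line in spec_markdown.splitlines():
        m = REQ_LINE.match(line.strip())
        if m:
            issues.append({
                "title": f"[{m['id']}] {m['text']}",
                "body": f"Source spec: {spec_name}\nRequirement: {m['id']}",
                "labels": ["from-spec"],
            })
    return issues

spec = """# Filtering and sorting
- [FS-1] Filter projects by region
- [FS-2] Sort projects by completion date
Notes: untagged lines are ignored
"""
issues = spec_to_issues("spec-filtering-and-sorting-logic.md", spec)
print([i["title"] for i in issues])
```

Because the same spec always yields the same payloads, the format stays consistent from run to run.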

Decompose, Don't Just Convert

The mapping from input documents to specs is rarely 1:1. Organize specs by delivery boundary, not by source document.

Input

SunGroup-BRD.docx

Output — 4 focused specs

- spec-project-discovery-behavior.md

- spec-project-data-contract.md

- spec-filtering-and-sorting-logic.md

- spec-quality-and-acceptance.md

Every requirement traces back to its source via a traceability index. Not spec-v2-final. Not requirements-copy-3. Names that stay stable and mean something to every role on the team.
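A traceability index can be checked mechanically. A minimal sketch, assuming a hypothetical index format (requirement ID, source location, target spec); the section numbers below are made up for illustration. It flags spec requirements with no source and source requirements that never landed in a spec.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Trace:
    req_id: str
    source: str  # document plus location, e.g. a section or sheet name
    spec: str    # the focused spec file the requirement belongs to

def check_traceability(index: list, spec_req_ids: dict) -> list:
    """Return problems found by comparing the index against the IDs present in each spec."""
    by_id = {t.req_id: t for t in index}
    problems = []
    for spec, req_ids in spec_req_ids.items():
        for rid in sorted(req_ids):
            t = by_id.get(rid)
            if t is None:
                problems.append(f"{rid} in {spec} has no source")
            elif t.spec != spec:
                problems.append(f"{rid} indexed under {t.spec} but found in {spec}")
    placed = set().union(*spec_req_ids.values())
    for t in index:
        if t.req_id not in placed:
            problems.append(f"{t.req_id} from {t.source} is not in any spec")
    return problems

# Hypothetical index entries (section numbers are illustrative only)
index = [
    Trace("FS-1", "SunGroup-BRD.docx, section 3.2", "spec-filtering-and-sorting-logic.md"),
    Trace("DC-1", "SunGroup-BRD.docx, section 4.1", "spec-project-data-contract.md"),
]
specs = {"spec-filtering-and-sorting-logic.md": {"FS-1", "FS-2"}}
for problem in check_traceability(index, specs):
    print(problem)
```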

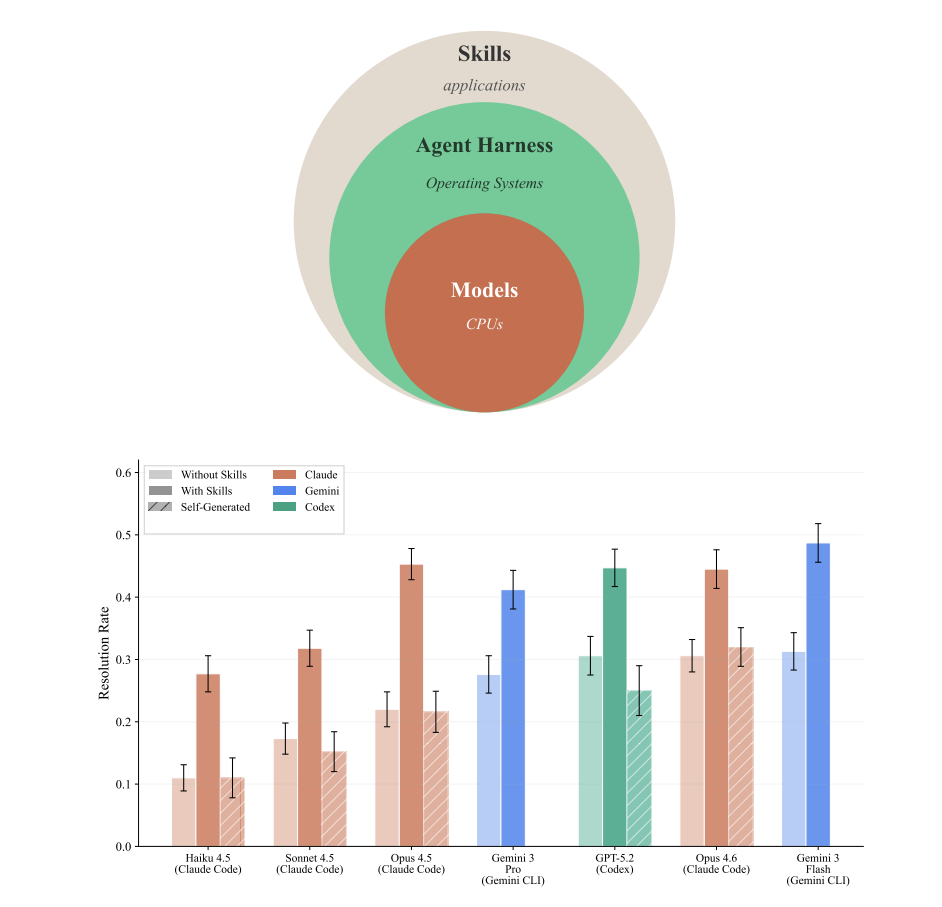

From Issues to Working Code

An agent picks up an issue, reads the spec, and builds the feature — with skills to accelerate it.

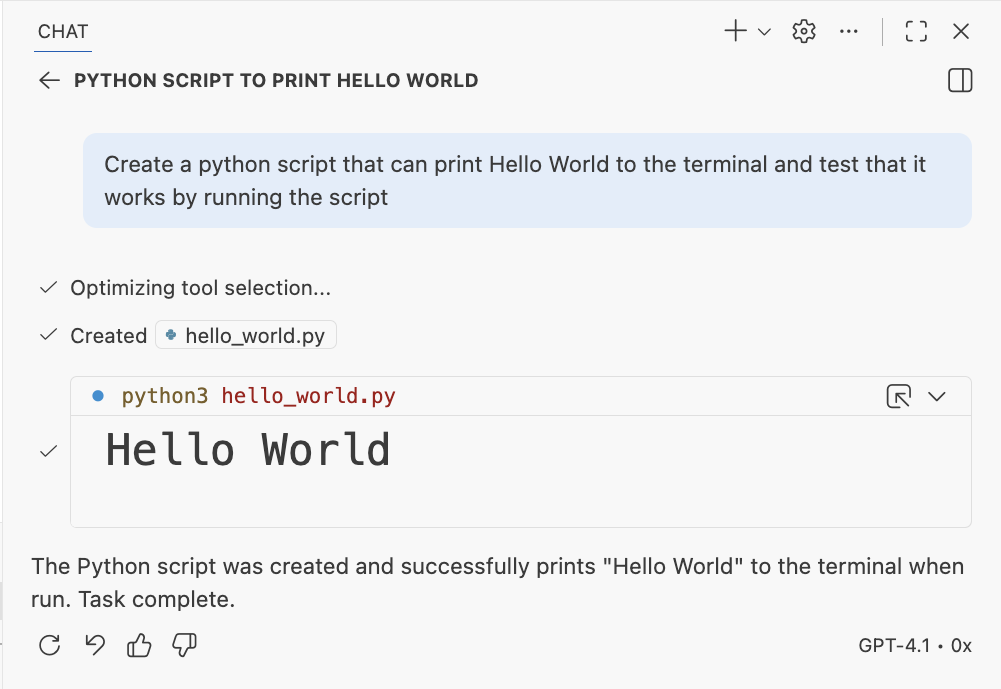

Without Skills

The agent writes generic code. It might use outdated patterns, miss best practices, or produce something that needs heavy refactoring.

More iteration cycles

With Curated Skills

The agent reads skill files first — learning your team's patterns, tech stack, and standards. It produces code that matches your architecture from the start.

Production-ready faster
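A sketch of what "reads skill files first" can mean, assuming skills are stored as `SKILL.md` files with a small `---`-fenced frontmatter carrying `name` and `description`. The skill names and descriptions below are hypothetical, and the keyword-overlap selection is a naive stand-in: real agents typically let the model decide relevance from the descriptions.

```python
def parse_frontmatter(text: str) -> dict:
    """Read simple `key: value` pairs between the leading '---' fences (no nested YAML)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta

def pick_skills(task: str, skills: dict) -> list:
    """Naive illustration: keep skills whose description shares a longer word with the task."""
    task_words = {w for w in task.lower().split() if len(w) > 3}
    picked = []
    for path, text in skills.items():
        meta = parse_frontmatter(text)
        desc_words = {w for w in meta.get("description", "").lower().split() if len(w) > 3}
        if task_words & desc_words:
            picked.append(meta.get("name", path))
    return picked

# Hypothetical skill files keyed by path
skills = {
    "skills/page-layout/SKILL.md":
        "---\nname: page-layout\ndescription: Build pages with the shared layout and design tokens\n---\n# Steps\n",
    "skills/api-client/SKILL.md":
        "---\nname: api-client\ndescription: Call backend endpoints through the shared typed client\n---\n# Steps\n",
}
print(pick_skills("Build the project showcase pages", skills))  # ['page-layout']
```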

Sun Group Project Showcase

[ LIVE DEMO ]

Switch to browser to show the finished application

Best Practices

Context

Curated Skills

Curated Skills (beige) raise resolution rates by 16.2 percentage points on average; self-generated Skills (amber) provide negligible or negative benefit.

Figure 1. Agent architecture stack and resolution rates across 7 agent-model configurations on 84 tasks. — arxiv.org/html/2602.12670v1

Context

AGENTS.md

Developer-provided files only marginally improve performance (+4% on average), while LLM-generated context files have a small negative effect (−3%). Context files increase costs by over 20%.

These observations are robust across different LLMs and prompts. — arxiv.org/html/2602.11988v1

Context

Prompting: Explicit is better than implicit

- Reference specific files to show example code

- Describe the expected output format

- Break complex tasks into smaller, focused prompts

- Include constraints and edge cases up front

The more precise your prompt, the better the agent's first attempt — saving iteration cycles.
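The checklist above can be turned into a small template so every prompt states the same four things. A minimal sketch; the file path, task, and constraints in the example are hypothetical.

```python
def build_prompt(task: str, example_files: list, output_format: str, constraints: list) -> str:
    """Assemble an explicit prompt: the task, reference files, expected output, constraints."""
    sections = [f"Task: {task}"]
    if example_files:
        sections.append("Follow the patterns in: " + ", ".join(example_files))
    sections.append(f"Output format: {output_format}")
    if constraints:
        sections.append("Constraints and edge cases:\n" + "\n".join(f"- {c}" for c in constraints))
    return "\n\n".join(sections)

# One small, focused prompt per subtask rather than one large request
prompt = build_prompt(
    task="Add a region filter to the project list",
    example_files=["src/components/ProjectList.tsx"],  # hypothetical path
    output_format="A unified diff touching only the listed files",
    constraints=["An empty region shows all projects", "Do not add new dependencies"],
)
print(prompt)
```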

Verification

Unit Tests

Validate individual functions and modules produced by the agent.
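For example, if an agent produced the filtering logic from the filtering-and-sorting spec, a few unit tests pin down its behavior. Both the function and its field names (`region`, `year`) are hypothetical; run the tests with `python -m unittest`.

```python
import unittest

# Hypothetical agent-produced function for spec-filtering-and-sorting-logic.md
def filter_and_sort_projects(projects, region=None):
    """Keep projects in `region` (all projects if None), newest completion year first."""
    selected = [p for p in projects if region is None or p["region"] == region]
    return sorted(selected, key=lambda p: p["year"], reverse=True)

class FilterAndSortTest(unittest.TestCase):
    PROJECTS = [
        {"name": "A", "region": "North", "year": 2019},
        {"name": "B", "region": "South", "year": 2023},
        {"name": "C", "region": "North", "year": 2022},
    ]

    def test_filters_by_region(self):
        names = [p["name"] for p in filter_and_sort_projects(self.PROJECTS, "North")]
        self.assertEqual(names, ["C", "A"])

    def test_no_region_keeps_all_newest_first(self):
        names = [p["name"] for p in filter_and_sort_projects(self.PROJECTS)]
        self.assertEqual(names, ["B", "C", "A"])

    def test_unknown_region_returns_empty(self):
        self.assertEqual(filter_and_sort_projects(self.PROJECTS, "East"), [])
```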

Traces

Inspect agent reasoning and tool calls to understand its decisions.
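Traces are easier to review when summarized. A minimal sketch, assuming a hypothetical trace exported as JSON Lines with `step`, `type`, `tool`, `ok`, and `error` fields; real tools each have their own trace format.

```python
import json
from collections import Counter

def load_trace(jsonl: str) -> list:
    """Parse one JSON object per non-empty line."""
    return [json.loads(line) for line in jsonl.splitlines() if line.strip()]

def summarize_trace(events: list) -> dict:
    """Count tool calls per tool and collect the calls that failed."""
    calls = [e for e in events if e.get("type") == "tool_call"]
    return {
        "tool_counts": Counter(e["tool"] for e in calls),
        "failures": [(e["step"], e["tool"], e.get("error", "")) for e in calls if not e.get("ok", True)],
    }

# Hypothetical trace: the agent runs tests, sees failures, edits, and re-runs
trace_jsonl = """
{"step": 1, "type": "message", "text": "Reading the spec first"}
{"step": 2, "type": "tool_call", "tool": "read_file", "ok": true}
{"step": 3, "type": "tool_call", "tool": "run_tests", "ok": false, "error": "2 tests failed"}
{"step": 4, "type": "tool_call", "tool": "edit_file", "ok": true}
{"step": 5, "type": "tool_call", "tool": "run_tests", "ok": true}
"""
summary = summarize_trace(load_trace(trace_jsonl))
print(summary["failures"])  # [(3, 'run_tests', '2 tests failed')]
```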

Functionality Tests

End-to-end checks that the feature works as intended.

Real Browser Validation

Run the shipped app in a real browser, exercise key flows, and document the result in issue #1. That gives stakeholders a concrete artifact even when there is no separate PR.


Version Control

- Commit often — create checkpoints as the agent works

- Maintain documentation — keep READMEs and docs in sync

- Expect more PRs: review agent-generated code carefully

What This Means

for Sun Group

The Impact

- Faster prototyping from spec to working code

- Reduction in spec-to-issue handoff time

- Scales with your team: agents don't burn out

These aren't hypothetical. This is what we see across our engineering teams today.

Two Pillars for Your Team

Pillar 1: AI-Ready Specs

For BAs, PMs, and product teams

- Structured requirement templates

- Document-to-spec conversion

- Automated issue generation

- Quality gates before handoff

Pillar 2: AI-Native Development

For developers and tech leads

- Curated skill libraries

- Agent configuration and setup

- AI-assisted code review

- 20% time for AI training

What CoderPush Delivers

Training Playbook

Hands-on workshops for BAs, PMs, and developers. Your team learns by building real features with AI agents.

Custom Skill Library

Curated skills tailored to your codebase, architecture, and workflows. Agents that know your standards.

Spec Framework

Templates that turn your existing document workflows into agent-ready specifications.

Let's Build Together

The best time to adopt AI-native engineering was yesterday.

The second best time is today.

What We Covered

- Code completion → generation → agentic coding

- Live demo: documents to deployed application

- Best practices: context, verification, version control

- Two pillars: AI-ready specs + AI-native development

Harley Trung

CEO & Co-founder, CoderPush · coderpush.com